AI's black box problem

It works, but how does it work?

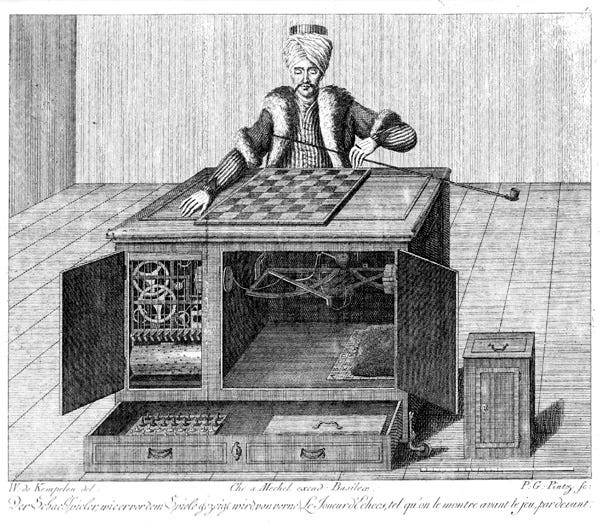

The Mechanical Turk was an early example of an autonomous chess-playing machine. The Turk was a large box upon which sat a character dressed in Turkish finery. The right hand and arm of The Turk rested upon a chess board and moved the pieces. Several doors of the machine opened to show onlookers a vast series of levers and gears. The open doors appeared to reveal the entire intricate mechanism of the amazing machine.

As with a horse that can perform long division, the novelty wasn’t that The Turk was a great chess player, although it won most of the games it played. The novelty was that this array of levers, gears, and pulleys could play chess at all. Indeed, even with modern digital computing power, it is only in the last quarter century or so that computers have been able to consistently beat grandmasters at chess. How could these levers and pulleys do it?

To the spectators of these chess games, The Turk was a black box. In engineering, a black box is a system whose internal workings are hidden, known only by its inputs and outputs. The box masks, and does not divulge, its inner workings.

The Turk could play chess, and play it well, but onlookers had no idea how it worked. This was not meant to be a magic trick, in which the audience is amazed less by any belief in real magic than by the ingenuity of the illusion. The Turk was exhibited on and off for a full 84 years, from 1770 until 1854, first around Europe and later in the Americas, and was a great mechanical chess player, not an illusion.

Well, that’s not really true, because The Turk did not play chess against anyone. In reality, a human operator hidden inside the cabinet actually played the games, moving the pieces with magnets and levers. The Turk was not a true black box, because someone did know how it operated.

When people saw The Turk, they witnessed a machine which appeared to work mechanically but was controlled by a human.

With AI, we have the opposite situation. It appears that an intelligent agent is controlling AI, but this time the box really is empty.

And, like The Turk’s spectators, we can only look on in amazement.

I have had occasion to consider this because of a recent project to convert a large computer program from an old, outdated language to a modern one. The conversion is being done primarily with Claude, the AI program from Anthropic. This project had been contemplated for several years, but it was never practical before, because the time involved was too great. Without AI, the conversion would take between two and three years to complete. With AI, it will take less than six months. That’s a savings and an efficiency that can’t be passed up.

But the savings and efficiency come at a price.

The original program was written almost entirely by me (excepting things like external libraries, which are basically blocks of code or functionality that can be dropped into a program). Since I wrote nearly every line of the program, I know what nearly every line of the program does. If something doesn’t work, it’s fairly easy to pin down where and why the problem is happening and fix it.

With Claude writing (or really converting) the code, the process is very different. Mostly, everything works from the start. The AI makes a few mistakes here and there, including some rather dumb errors that a human wouldn’t make. But these issues are flagged by the computer as needing attention, and they are easy to fix. Mainly I fix them by telling Claude which lines give an error, and then he, I mean it, writes the code to fix them.

However, since in general the code is written very quickly and very accurately, I’m not looking over every line. A Windows-based program usually consists of a series of forms—think data entry screens or screens to display previously entered data. Often, Claude will write a form that works “right out of the box” and I can either barely review it or not review it at all.

But I have noticed that occasionally Claude has a hard time getting something right. It may try time and again but just can’t fix something. At that point, a human programmer (me) has to wade into the code and try to get it working. While having AI write the code is at least 100 times faster than writing it myself, debugging a problem actually takes longer with AI-generated code. That’s because, not having written the code myself, I have less understanding of how it works. But to debug the code, I must understand it. That’s not always easy because AI doesn’t necessarily write code the way I would.

The issue is that the farther the programmer is removed from writing the program code, the less able the programmer is to fix it.

In truth, programmers have for a long time been a good distance away from the code the computer actually runs. This is because computers speak only binary (1s and 0s), while people don’t speak binary well at all. These 1s and 0s are called machine language, and in the early days of computing, it was possible to program directly in 1s and 0s, sometimes represented as lights turned on or off.

It is traditional for a beginner’s first program to display “Hello, world!” on the computer screen. By the time the computer runs this, it is a series of 1s and 0s which are meaningful to the computer processor but essentially unintelligible to humans. Slightly abstracted from that, the program can be viewed more easily in hexadecimal (base 16). Displaying “Hello, world!” might look something like this in hexadecimal:

BA 0C 01 B4 09 CD 21 B8 00 4C CD 21 48 65 6C 6C 6F 2C 20 77 6F 72 6C 64 21 24
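To make the binary-versus-hex comparison concrete, here is a small Python sketch (an illustration added for this article, not part of any program discussed here). It takes only the last 14 bytes of the dump above, which hold the message text, and shows each byte as binary, as hex, and as the ASCII character it stands for. The names `MESSAGE_HEX`, `describe`, and `decode` are made up for the example.

```python
# The final 14 bytes of the hex dump above: the message text itself.
MESSAGE_HEX = "48 65 6C 6C 6F 2C 20 77 6F 72 6C 64 21 24"


def describe(hex_dump: str) -> list[tuple[str, str, str]]:
    """Return (binary, hex, character) for each byte in a spaced hex dump."""
    data = bytes.fromhex(hex_dump)  # whitespace between byte pairs is ignored
    return [(format(b, "08b"), format(b, "02X"), chr(b)) for b in data]


def decode(hex_dump: str) -> str:
    """Decode a spaced hex dump as ASCII text."""
    return bytes.fromhex(hex_dump).decode("ascii")


if __name__ == "__main__":
    # One row per byte: eight binary digits, two hex digits, one character.
    for binary, hex_pair, char in describe(MESSAGE_HEX):
        print(binary, hex_pair, repr(char))
    print(decode(MESSAGE_HEX))
```

The decoded text ends with a `$`, which is the end-of-string marker that the DOS print routine used by this program expects. The bytes before the message are processor instructions rather than text, so they do not decode into anything readable this way.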

That’s better than reading binary data, but it’s still rather meaningless. For this reason, assembly language was created. Assembly language is the lowest level of programming that is truly meaningful to humans. In assembly language, the above hex code looks something like this:

mov dx, offset message

mov ah, 09h

int 21h

mov ax, 4C00h

int 21h

message db 'Hello, world!$'

Assembly language is human-readable, but still very low level. Assembly works directly with the memory and processor of the computer. For example, in the above code, dx and ax are parts of the processor called registers. int 21h triggers a “software interrupt” which transfers control to the operating system to perform a particular action.

Moving from assembler to a higher-level language such as Python, displaying “Hello, world!” becomes:

print("Hello, world!")

That’s it. No worrying about binary, hex, or computer registers or memory locations. But when print("Hello, world!") runs, it still must eventually be translated into 1s and 0s, because that’s what the computer understands. The tree of abstraction goes higher and higher, but the roots still go down to the very bottom.
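You can peek one layer down from Python itself. The sketch below assumes the standard CPython interpreter and uses its built-in `dis` module to list the bytecode instructions that the one-line program compiles to. Bytecode is an intermediate layer that the interpreter executes, not the processor's own machine language, and the exact instruction names vary between Python versions. The names `SOURCE` and `bytecode_steps` are made up for the example.

```python
import dis

# The one-line program from the text, held as a string so it can be compiled.
SOURCE = 'print("Hello, world!")'


def bytecode_steps(source: str) -> list[tuple[str, object]]:
    """Compile source and return (instruction name, argument) pairs."""
    code = compile(source, "<hello>", "exec")
    return [(ins.opname, ins.argval) for ins in dis.get_instructions(code)]


if __name__ == "__main__":
    # Instruction names differ across CPython versions, so no output is shown.
    for opname, argval in bytecode_steps(SOURCE):
        print(opname, argval)
```

Even this single line turns into several lower-level steps: look up the name `print`, load the text, make the call, and discard the result.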

But here’s the thing with AI writing programs. With AI, instead of using Python to write a program that displays “Hello, world!” on the screen, all you really need to do is tell AI to write a program which displays “Hello, world!” As long as the program that AI writes works (and it will work) the programmer no longer even needs to know the language in which the program is written. It works, and that’s enough.

Of course, you could tell AI to do far more than display a message on a computer. You could, for example, tell it to write an accounting program. Or maybe write the internal software for a car. Maybe someday you could tell it to write air traffic control software for an entire nation. And all these systems might work beautifully.

As you traverse from binary to hex to assembler to Python to AI, at every level productivity is increased by orders of magnitude. But the distance from what is actually happening is increasing also. And going from Python to AI seems like the largest leap of them all. The productivity gains are immense, but so is the distance.

That’s where the black box of the Mechanical Turk comes in.

As time goes on and AI writes more and more automated systems, it won’t really be possible for people to understand fully how they work. That’s because figuring out how they work will simply take too much time. Suppose AI writes a complex system in a week, but it would take human programmers months to go through it and gain a good understanding of it. Who will pay the money and take the efficiency hit of waiting for humans to go through it before deploying the new system? If AI can write a complex system in virtually no time but you wait for the humans to look it over, you’ve lost out on much of the benefit of AI.

But why would you have human programmers review the code when AI could review it? If Claude writes a system, you could have Claude check it over for mistakes. Or if you prefer, you could have ChatGPT or Grok review the code. That way, instead of taking months, you’ll be done before lunch.

The program I am working on isn’t a matter of life and death. But AI will certainly be writing systems that are. In fact, an AI-written system will likely be completed faster and deployed sooner than one written by humans. AI will be able to write systems that are incredibly efficient and quickly operational, but are so complex that they are mostly incomprehensible to humans. So what happens if they stop working? That’s easy: AI fixes them. But what if AI can’t fix them? There’s the real problem, because at this point no human will have a good understanding of these systems. Programmers could become like the Maytag repairman, sitting around waiting for an AI failure. But once called, will they be able to fix anything?

When people talk about AI taking over, this is what I think of. AI won’t suddenly decide that humans are useless and get rid of them. Rather, people will start depending on AI so heavily that they can’t do without AI, and only AI will be able to modify AI.

If AI is in complete charge of the systems we rely on every day, then in a real way it has taken over, because it will set the parameters within which we operate. And like people watching The Turk, we won’t know what’s really going on.